What Color Is That House?

How to be wrong a lot in one easy step: Always believe your eyes.

Let me be the first person in history to point out that things aren’t always as they seem. (Sometimes they are though — the buildings in the reflection aren’t warped, but the glass reflecting them is.) Image courtesy of Flickr user katsrkool.

We’ve all been there. Well, most of us have been there. OK: I’ve been there. (That’s me trying to be more like the Fair Witnesses discussed below.)

You know, that awkward moment when you realize you just accidentally said something insensitive and want to make amends: “Oh! I’m so sorry! I didn’t know. Please forgive me.”

It’s not a good feeling, but it’s part of life. The thing is, after one mistake of this type, there’s no excuse for not trying to avoid it forever after. That might not be 100% possible. But it’s always a good idea to ask: Wait, what might I not know in this situation? Because the answer is always: a whole lot.

In Stranger in a Strange Land, Robert Heinlein’s novel about a human’s arrival on Earth after having been reared by aliens, there’s one idea that has always stuck with me. In the world of the story there is a select group of people known as “Fair Witnesses.” Fair Witnesses are wholly impartial and incorruptible arbiters who are relied upon to provide precise observations in legal disputes.

Not just anyone can become a Fair Witness. They tend to be a tad eccentric.

A minor scene early in the book helps to explain the nature and qualities inherent to Fair Witnesses:

“Anne!”

Anne was on the springboard; she turned her head. Jubal called out, “That house on the hilltop — can you see what color they’ve painted it?”

Anne looked, then answered, “It’s white on this side.”

Jubal went on to Jill, “You see? It doesn’t occur to her to infer that the other side is probably white, too. All the King’s horses couldn’t force her to commit herself . . . unless she went there and looked — and even then she wouldn’t assume that it stayed whatever color it might be after she left.”

“Anne is a Fair Witness?”

“Graduate, unlimited license, and admitted to testify before the High Court.”

Jubal chose a mundane question to underscore just how anomalous the cognitive processes of a Fair Witness are. He’s kind of poking fun at the extreme lengths they go to in order to maintain their pure objectivity, but the lesson is valuable just the same: We are constantly presented with only a partial view of the “house,” whether it be an actual physical structure or a political article or a story about a colleague. We are constantly inferring details and facts that we don’t — and can’t — know. It’s impossible to operate in the world without inferring stuff, but rarely will it prove harmful to stop and consider: What part of this am I unable to see? Can I trust my own assumptions? What agenda does this person have? What might this person be going through that I couldn’t possibly know about?

Because our brains are constantly playing tricks on us, there is a disconcerting number of similar points to keep in mind: logical fallacies in rhetoric, laws of economics that seem counterintuitive at first, elements of statistics, even straightforward dictionary entries. Precision of thought, accuracy in language, unbiased reasoning: we all imagine we are above average in these qualities, but even if that were mathematically possible, how good is “above average” really when we’re talking about flawed human beings? The list of our mistakes is long because nobody’s perfect. But we do have one thing going for us: we can always strive to be better.

Can You Handle the Truth?

Every year, Edge.org comes up with a Big Question, then poses it to leading intellectuals and posts their answers. It’s probably weird to have a favorite Edge question, but I do have one: it’s the one from 2011. It’s not so much the question itself as the answers, which were so varied and consistently fascinating that year.

The question was: What scientific concept would improve everybody’s cognitive toolkit? The respondents each picked some scientific concept — a shorthand abstraction, or “SHA” — that can improve one’s reasoning ability.

I highly recommend spending some time with that year’s answers because there are so many good ones. (That includes the very first response, which comes from author, psychology professor, and Nobel Prize–winning economist Daniel Kahneman: “The Focusing Illusion,” i.e., “Nothing In Life Is As Important As You Think It Is, While You Are Thinking About It,” a concept I try to keep to the fore and have addressed a bit in this space. And the most beautifully named concept might be Clifford Pickover’s Kaleidoscopic Discovery Engine, which explains how different people often independently arrive at the same innovative idea.)

But along the lines of that Heinlein snippet above, let’s focus on the answer from Richard Nisbett, author and professor of psychology at the University of Michigan. The concept he said would be most useful is “Graceful SHAs.”

Here are two of Dr. Nisbett’s examples of what a “graceful” shorthand abstraction looks like. He poses them as questions, and the answers reveal some of our cognitive biases and illogical reasoning. See how you do:

Question: David L., a high school senior, is choosing between two colleges, equal in prestige, cost and distance from home. David has friends at both colleges. Those at College A like it from both intellectual and personal standpoints. Those at College B are generally disappointed on both grounds. But David visits each college for a day and his impressions are quite different from those of his friends. He meets several students at College A who seem neither particularly interesting nor particularly pleasant, and a couple of professors give him the brushoff. He meets several bright and pleasant students at College B and two different professors take a personal interest in him. Question: Which college do you think David should go to?

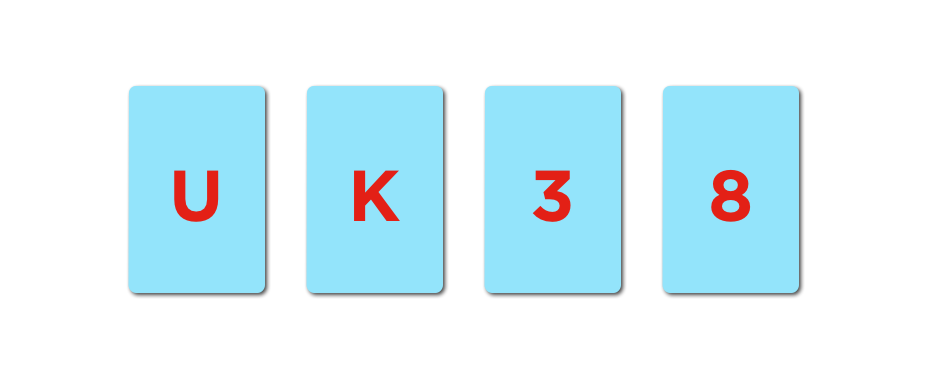

In another question, Dr. Nisbett proposes the following: In front of you there is a stack of four cards. Each card has a single letter (either a consonant or a vowel) on its front face, while its reverse side shows either an even or an odd whole number. Got it?

Question: Which card or cards below must you turn over to determine whether the following rule has been violated or not? “Rule: If there is a vowel on the front of the card, then there is an odd number on the back.”

Here are the answers:

Dr. Nisbett’s Answer to Question 1:

“If you said that ‘David is not his friends; he should go to the place he likes,’ then the SHA of ‘the law of large numbers’ [LLN] has not been sufficiently salient to you. David has one day’s worth of experiences at each; his friends have hundreds. Unless David thinks his friends have kinky tastes he should ignore his own impressions and go to College A. A single college course in statistics increases the likelihood of invoking LLN. Several courses in statistics make LLN considerations almost inevitable.”
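To see why one day’s worth of impressions counts for so little, here is a rough Python simulation. It assumes, purely for illustration, that every single impression of a college is its true quality (set to 0) plus random luck with a standard deviation of 1, and it compares how much an average wobbles when it rests on 1 impression versus 300 (an arbitrary stand-in for the friends’ “hundreds”). None of these numbers come from Nisbett.

```python
import random
import statistics


def spread_of_average(sample_size: int, trials: int = 2000, seed: int = 0) -> float:
    """Standard deviation of the average of `sample_size` noisy impressions,
    measured across many simulated trials."""
    rng = random.Random(seed)
    averages = []
    for _ in range(trials):
        # Hypothetical model: each impression = true quality (0.0) + luck (mean 0, sd 1).
        impressions = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
        averages.append(statistics.fmean(impressions))
    return statistics.stdev(averages)


one_day = spread_of_average(1)     # David: a single visit's impression
hundreds = spread_of_average(300)  # his friends: many accumulated experiences
print(f"one impression: {one_day:.2f}, 300 impressions: {hundreds:.3f}")
```

With these made-up numbers, the one-impression average swings about as widely as the luck itself, while the 300-impression average swings roughly 1/√300 as much, about a seventeenth. That shrinking spread is the law of large numbers at work.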

Dr. Nisbett’s Answer to Question 2:

“If you said anything other than ‘turn over the U and turn over the 8,’ psychologists Wason and Johnson-Laird have shown that you would be in the company of 90% of Oxford students. [Most people say the U and the 3, or just the U.] Unfortunately, you — and they — are wrong. The SHA of the logic of the conditional has not guided your answer. ‘If P then Q’ is satisfied by showing that the P is associated with a Q and that the not-Q is not associated with a P. A course in logic actually does nothing to make people better able to answer questions such as [this]. Indeed, a Ph.D. in philosophy does nothing to make people better able to apply the logic of the conditional to simple problems like [this] or meatier problems of the kind one encounters in everyday life.”
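For anyone who likes to check this kind of thing mechanically, here is a rough Python sketch of the reasoning behind Nisbett’s answer: a card needs turning only if some possible hidden face could break the rule. The text names the U, the 3, and the 8; the fourth card is a consonant, shown here as K, which is an assumed stand-in rather than something stated in the text.

```python
import string

VOWELS = set("AEIOU")
LETTERS = string.ascii_uppercase  # any letter could be hiding on a card's front
NUMBERS = range(10)               # only parity matters, so 0-9 covers odd and even


def violates(letter: str, number: int) -> bool:
    # "If vowel on front, then odd number on back" fails only for a vowel with an even number.
    return letter in VOWELS and number % 2 == 0


def must_turn(visible: str) -> bool:
    # A card must be turned only if at least one possible hidden face would break the rule.
    if visible.isalpha():
        return any(violates(visible, n) for n in NUMBERS)
    return any(violates(letter, int(visible)) for letter in LETTERS)


cards = ["U", "K", "3", "8"]  # K is an assumed stand-in for the consonant card
to_turn = [card for card in cards if must_turn(card)]
print(to_turn)  # ['U', '8']
```

Note why the 3 drops out: the rule says nothing about what must sit behind an odd number, so no letter on its front could break it. Only the 8 can hide a violation, namely a vowel.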

The formal rules of symbolic logic alluded to in that second answer might not seem likely to come in handy very often, but the puzzle is really an exercise in rigorous thinking, and we could all use more practice at that. The law of large numbers, on the other hand, will surface rather frequently once you’re looking out for it. (Of course, that could be an illusion: the Baader-Meinhof Phenomenon, aka the Frequency Illusion, to be exact.)

What errors in thinking — logical fallacies, cognitive biases — do you fall back on most often? Naturally, Wikipedia has some handy lists to peruse if you want to take a long hard look in the mirror (or be on the lookout for chances to call out your friends, which they will always 100% thank you for and never find annoying, promise):

98 Decision-making, Belief, and Behavioral Biases

Comprehensive (yeah, I lost count) List of Logical Fallacies

In the spirit of good faith, here are some I know I’ve succumbed to: Choice-Supportive Bias, or “the tendency to remember one’s choices as better than they were.” This one goes hand-in-hand with Hindsight Bias (“the tendency to see past events as predictable”). I think it’s a specific example of a tendency to romanticize the past. Conversely, I’m also susceptible to Optimism Bias, or “the tendency to overestimate favorable and pleasing outcomes.” And of course, there’s the Planning Fallacy, “the tendency to underestimate task-completion times.” I’m much more conscious of that one now though. It’s the little victories, right?

That’s not to say those are the only ones on my ledger. It’s likely that every single person has committed virtually every one of these errors at some point. Actually, the best use of those lists might be to really focus on the ones you can’t think of personal instances of, as maybe those are the examples your mind has most successfully hidden from you.

Becoming more aware of these all-too-human tendencies can be valuable both personally and professionally. It’s good practice to give others the benefit of the doubt (we’re all guilty of these things) and to question authority (we’re all guilty of these things), and it’s hard to think of a single occupation that wouldn’t be well served by a deeper understanding of human behavior. Salespeople, marketers, designers, product managers, CEOs — it would do each of us credit to look more closely at other people’s thinking and motivations, and especially at our own.

Posted on July 14, 2014.